ONNX Runtime

Open-source runtime for ONNX models with hardware-specific optimizations for CPUs, GPUs, and edge devices. Supports inferencing and training across multiple ML frameworks.

ONNX Runtime is a high-performance inference and training engine for machine learning models in the Open Neural Network Exchange (ONNX) format. Developed by Microsoft and released under the MIT license, it enables developers to deploy models trained in PyTorch, TensorFlow, scikit-learn, and other frameworks across diverse hardware targets with minimal code changes.

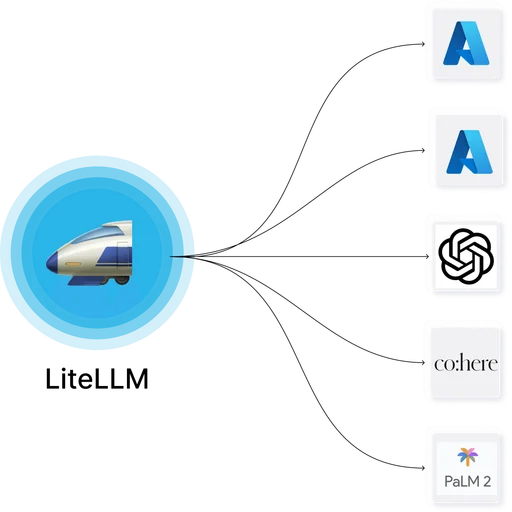

The runtime abstracts hardware complexity through a pluggable execution provider architecture. The default CPU provider uses ONNX Runtime's own optimized kernels, and optional providers such as Intel oneDNN or the Arm Compute Library target specific CPU families. GPU acceleration is available via CUDA and TensorRT on NVIDIA hardware and via DirectML on Windows. Edge and mobile deployments use CoreML on Apple devices, the Qualcomm Neural Network SDK on Android, and WebGPU for browser-based inference.
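As a rough illustration of the execution provider model, the sketch below picks providers in priority order and always keeps the CPU provider as a last resort. The provider strings are the names ONNX Runtime's Python package registers; the priority order, the helper names `pick_providers` and `create_session`, and the `model_path` argument are assumptions made for this example.

```python
# Provider names as registered by the onnxruntime Python package.
# The ordering here is an illustrative preference, not an official recommendation.
PREFERRED = (
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "DmlExecutionProvider",
    "CoreMLExecutionProvider",
)
CPU = "CPUExecutionProvider"


def pick_providers(available, preferred=PREFERRED):
    """Return the preferred providers that are available, always ending with CPU."""
    chosen = [p for p in preferred if p in available and p != CPU]
    chosen.append(CPU)
    return chosen


def create_session(model_path):
    """Open a session on the best available providers (hypothetical helper)."""
    # Imported lazily so pick_providers stays usable without onnxruntime installed.
    import onnxruntime as ort

    providers = pick_providers(ort.get_available_providers())
    session = ort.InferenceSession(model_path, providers=providers)
    # get_providers() reports what the session actually registered.
    print("Active providers:", session.get_providers())
    return session
```

Calling `create_session("model.onnx")` on a machine without a GPU build would be expected to report only the CPU provider, which is a quick way to notice that acceleration is not in effect.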

ONNX Runtime powers production workloads at scale for companies including Adobe, Autodesk, Hugging Face, NVIDIA, Oracle, and Teradata. It is the default inference engine for ONNX models in Azure Machine Learning and integrates with the Olive model optimization toolchain for quantization, compression, and hardware-specific tuning.

Limitations

- Model conversion from PyTorch or TensorFlow to ONNX may not support all operators or dynamic shapes, requiring manual graph adjustments for complex architectures.

- Execution providers vary in operator coverage; a model optimized for CUDA may fall back to CPU for unsupported ops, creating performance cliffs.

- Quantization and reduced-precision conversion require expertise: INT8 quantization can degrade accuracy significantly without careful calibration, and FP16 conversion can lose precision in numerically sensitive layers.

- Training support (ORT Training) is limited compared to native PyTorch or TensorFlow, with fewer optimizers and limited support for distributed training strategies.

- WebAssembly and WebGPU backends have larger bundle sizes and longer initialization times compared to native mobile inference SDKs.
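One way to keep the quantization risk listed above visible is to compare a quantized model against its FP32 original on representative inputs. The sketch below uses dynamic quantization from `onnxruntime.quantization`, which needs no calibration dataset, and reports the largest output difference. The helper names, the file paths, and the `feed` dictionary are assumptions for illustration, and what counts as an acceptable difference depends on the model.

```python
def max_abs_diff(reference, candidate):
    """Largest element-wise absolute difference between two flat sequences."""
    ref, cand = list(reference), list(candidate)
    if len(ref) != len(cand):
        raise ValueError("outputs differ in length")
    return max((abs(r - c) for r, c in zip(ref, cand)), default=0.0)


def quantize_and_compare(fp32_path, int8_path, feed):
    """Quantize weights to INT8 and return the max drift per model output.

    `feed` maps input names to numpy arrays (hypothetical sample inputs).
    """
    # Imported lazily so max_abs_diff stays usable without onnxruntime installed.
    import onnxruntime as ort
    from onnxruntime.quantization import QuantType, quantize_dynamic

    quantize_dynamic(fp32_path, int8_path, weight_type=QuantType.QInt8)

    cpu = ["CPUExecutionProvider"]
    ref = ort.InferenceSession(fp32_path, providers=cpu).run(None, feed)
    out = ort.InferenceSession(int8_path, providers=cpu).run(None, feed)
    # Assumes every output is a tensor returned as a numpy array.
    return [
        max_abs_diff(r.ravel().tolist(), o.ravel().tolist())
        for r, o in zip(ref, out)
    ]
```

Running the comparison over a handful of real inputs rather than random tensors gives a more honest picture, since accuracy loss from quantization tends to depend on the input distribution.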

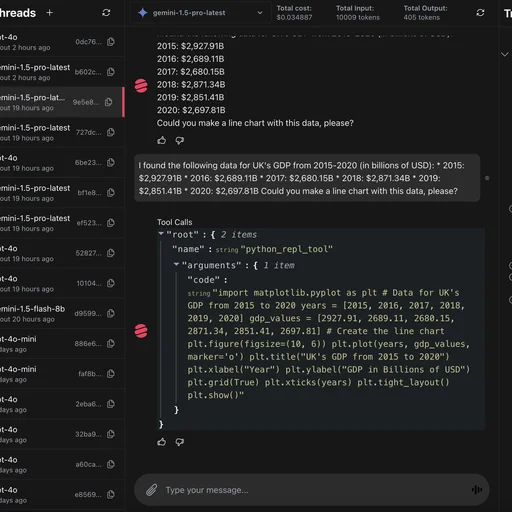

ONNX Runtime natively supports models exported from PyTorch via the ONNX format. PyTorch models can be converted to ONNX using torch.onnx.export() and then optimized and deployed through ONNX Runtime for production inference across diverse hardware targets.
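A minimal sketch of that export step follows, assuming a model with a single input and a single output. The tensor names `input` and `output`, the choice of opset 17, and the helpers `batch_dynamic_axes` and `export_to_onnx` are illustrative assumptions. Marking axis 0 as dynamic lets the exported graph accept any batch size.

```python
def batch_dynamic_axes(input_names, output_names):
    """Mark axis 0 of every named tensor as a variable-size 'batch' dimension."""
    return {name: {0: "batch"} for name in [*input_names, *output_names]}


def export_to_onnx(model, example_input, path, opset_version=17):
    """Export a single-input, single-output PyTorch module (hypothetical helper)."""
    # Imported lazily so batch_dynamic_axes stays usable without torch installed.
    import torch

    model.eval()  # export in inference mode (affects dropout, batch norm)
    torch.onnx.export(
        model,
        (example_input,),  # example arguments used to trace the model
        path,
        input_names=["input"],
        output_names=["output"],
        dynamic_axes=batch_dynamic_axes(["input"], ["output"]),
        opset_version=opset_version,
    )
```

The resulting file can then be loaded with `onnxruntime.InferenceSession(path)`. Models with several inputs or data-dependent control flow usually need more care than this sketch shows.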

ONNX Runtime supports TensorFlow models converted to ONNX format through the tf2onnx converter. This enables TensorFlow-trained models to leverage ONNX Runtime's hardware acceleration and cross-platform deployment capabilities.
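The conversion can be scripted by invoking tf2onnx's command-line entry point, as in the sketch below. The `--saved-model`, `--output`, and `--opset` flags belong to `tf2onnx.convert`; the helper names, the default opset of 13, and the paths are assumptions for illustration, and the call requires `tf2onnx` and TensorFlow to be installed in the same environment.

```python
import subprocess
import sys


def tf2onnx_command(saved_model_dir, output_path, opset=13):
    """Build the argument list for `python -m tf2onnx.convert`."""
    return [
        sys.executable, "-m", "tf2onnx.convert",
        "--saved-model", saved_model_dir,
        "--output", output_path,
        "--opset", str(opset),
    ]


def convert_saved_model(saved_model_dir, output_path, opset=13):
    """Run the converter and raise CalledProcessError if it fails."""
    subprocess.run(tf2onnx_command(saved_model_dir, output_path, opset), check=True)
```

Using `sys.executable` keeps the converter in the same interpreter and virtual environment as the calling script, which avoids picking up a different TensorFlow installation by accident.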