LiteLLM

Python-based AI gateway from BerriAI that proxies 100+ LLM providers behind one OpenAI-compatible API with virtual keys, hard-enforced budgets, cost dashboards, and an MCP federation endpoint. Core is MIT; SSO, JWT-to-key mapping, and audit retention sit in LiteLLM Enterprise.

LiteLLM is a Python SDK and proxy server from BerriAI that exposes a single OpenAI-compatible API over 100+ LLM providers. It runs as a Docker container backed by Postgres and Redis, with a built-in admin UI for managing keys, budgets, and routing.

What it does

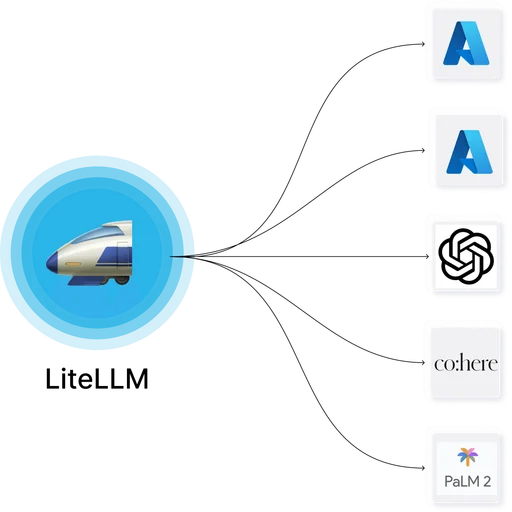

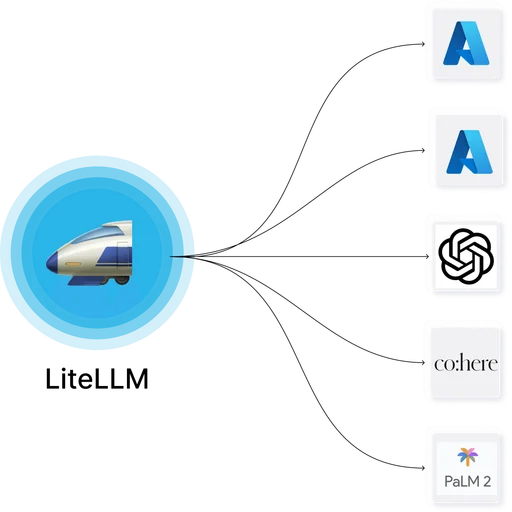

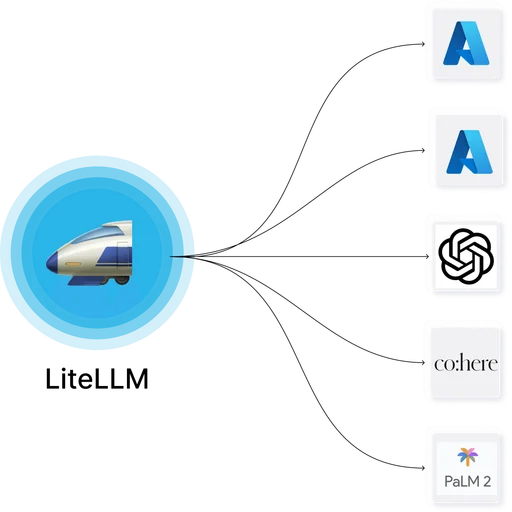

LiteLLM sits between applications and LLM providers, translating requests to and from each provider's native format. A config file declares providers, model aliases, routing groups, and failover chains. Clients hit LiteLLM with an OpenAI-compatible payload and a virtual API key, and LiteLLM handles provider selection, retries, rate limiting, cost accounting, and logging.
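The client side of this flow can be sketched with the Python standard library alone. The example below only builds the request and does not send it. The proxy address `http://localhost:4000`, the key `sk-example-virtual-key`, and the model alias `team-default` are placeholders assumed for illustration, not values from a real deployment.

```python
import json
import urllib.request


def build_chat_request(base_url, virtual_key, model, messages):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=body,
        headers={
            # The virtual key travels as a bearer token, as with the OpenAI API.
            "Authorization": f"Bearer {virtual_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


chat_request = build_chat_request(
    "http://localhost:4000",    # assumed proxy address
    "sk-example-virtual-key",   # placeholder virtual key
    "team-default",             # hypothetical model alias from the config file
    [{"role": "user", "content": "Hello"}],
)

# Sending is left to the caller, for example:
# with urllib.request.urlopen(chat_request) as response:
#     print(json.load(response))
```

Because the payload is OpenAI-compatible, an existing OpenAI client library pointed at the proxy's base URL should work the same way; which provider serves the alias is decided by the gateway, not the client.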

Providers supported include OpenAI, Anthropic, Google Gemini, Azure OpenAI, AWS Bedrock, Vertex AI, Cohere, Mistral, DeepSeek, Ollama, vLLM, and any endpoint that speaks the OpenAI chat completions protocol.

Where it fits

Teams use LiteLLM as the central control point for internal LLM traffic: issuing virtual keys to employees or services, enforcing per-key budgets at the API layer, aggregating cost and usage data for finance, and providing a single endpoint for IDE assistants, chat UIs, and internal applications. Since v1.78 (late 2025) it also federates upstream MCP servers — stdio, SSE, and streamable-HTTP transports — under a /mcp endpoint with per-virtual-key tool ACLs.
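Issuing a budgeted virtual key might look roughly like the sketch below, which builds an admin request without sending it. The `/key/generate` path and the `key_alias`, `models`, `max_budget`, and `budget_duration` fields reflect the proxy's key-management API as commonly documented, but they should be checked against the docs for the pinned version. The address, admin key, alias, and model name are hypothetical.

```python
import json
import urllib.request


def build_key_request(base_url, admin_key, alias, models, max_budget_usd, budget_duration):
    """Build (but do not send) a request asking the proxy for a new virtual key."""
    payload = {
        "key_alias": alias,                  # human-readable label for the key
        "models": models,                    # model aliases this key may call
        "max_budget": max_budget_usd,        # spend cap in USD
        "budget_duration": budget_duration,  # reset window, e.g. "30d"
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/key/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {admin_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


key_request = build_key_request(
    "http://localhost:4000",   # assumed proxy address
    "sk-example-admin-key",    # placeholder admin credential
    "ide-assistant-alice",     # hypothetical alias
    ["team-default"],          # hypothetical model alias
    25.0,
    "30d",
)
```

Keeping the budget on the key rather than in each application is what allows the gateway to reject over-budget calls at the API layer, regardless of which client is calling.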

Licensing and commercial tier

Core is MIT. BerriAI offers a commercial LiteLLM Enterprise tier with admin-UI SSO/SAML, JWT-to-virtual-key automatic mapping, audit log retention with S3 export, some secret-manager integrations, and additional guardrails. Deployments that need SSO on the admin UI without Enterprise commonly front the UI with an OIDC reverse-proxy (oauth2-proxy, Pomerium) via Traefik's ForwardAuth.

Deployment

LiteLLM deploys as a single Docker container plus Postgres and Redis. It runs on Docker, Docker Swarm, and Kubernetes (Helm charts provided). Releases ship weekly and config schemas occasionally change across minor versions, so production deployments pin to a specific image tag.
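A deployment pipeline could guard the pinning rule with a small check like the one below. This is a sketch under stated assumptions: the set of floating tag names and the rule that a pinned tag must contain a full `x.y.z` version are illustrative conventions, and the image references in the usage lines are examples only.

```python
import re

# Tags assumed to move over time; adjust to the registry actually in use.
FLOATING_TAGS = {"latest", "stable", "main-latest", "main-stable"}


def is_pinned(image_ref: str) -> bool:
    """Return True if the image reference names a digest or a versioned tag."""
    if "@sha256:" in image_ref:
        return True  # digest pins are immutable
    _name, sep, tag = image_ref.rpartition(":")
    # No colon means no tag; a "/" after the colon means it was a registry port.
    if not sep or "/" in tag:
        return False
    if tag in FLOATING_TAGS:
        return False
    # Require a full semantic version somewhere in the tag.
    return re.search(r"\d+\.\d+\.\d+", tag) is not None


pinned = is_pinned("ghcr.io/berriai/litellm:main-v1.78.0-stable")
floating = is_pinned("ghcr.io/berriai/litellm:main-latest")
```

Running a check like this in CI, before the image reference reaches a Compose file or Helm values, catches a floating tag before a weekly release can change the config schema underneath a running deployment.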

Limitations

- Config-breaking changes across minor versions require careful upgrade testing.

- Postgres schema migrations run at container startup; use a dedicated schema and DB role.

- Provider coverage is broad but not every exotic or regional provider is first-class — many are reached via their OpenAI-compatible endpoint rather than a native integration.

- Admin UI is functional but denser than the average SaaS dashboard; new operators take time to find settings.

- MCP gateway documentation is in the docs site but not yet prominent on the marketing site; expect to read /docs/mcp and /docs/mcp_control for the current feature set.

LiteLLM and Portkey Gateway

Two of the most widely used general-purpose OSS LLM gateways, differing in runtime (Python vs Node/Go), provider catalog, and scope of features in the commercial tier.

LiteLLM and Higress

LiteLLM and Higress both combine LLM gateway and MCP functionality under one binary. LiteLLM is Python-based with an open-core commercial tier; Higress is Envoy-based Apache 2.0 from Alibaba with a Chinese-primary documentation set.

LiteLLM and Bifrost

LiteLLM and Bifrost both cover LLM gateway plus MCP territory. LiteLLM is Python-first with mature tooling and an open-core commercial tier; Bifrost is Go-first Apache 2.0 from Maxim AI with a lighter operational footprint and SAML as a commercial feature.

LiteLLM and ContextForge

LiteLLM and ContextForge commonly pair in deployments that need both a governed LLM gateway (LiteLLM) and a dedicated MCP federation tier (ContextForge) with RBAC, plugin policy, and audit retention.

LiteLLM and Langfuse

LiteLLM sends traces directly to Langfuse via a built-in callback, giving teams rich trace analysis, prompt management, and evaluation on top of the gateway layer without application-level instrumentation.