LangDB

AI gateway from LangDB, implemented in Rust, with multi-provider routing, virtual keys, semantic caching, and structured observability. Published under the Elastic License v2, a source-available license that restricts offering the software as a managed service.

LangDB is an AI gateway from the LangDB team that routes LLM traffic across providers and adds structured observability, semantic caching, and virtual-key management. The gateway ships as a Rust binary and a Docker image. As the project evolved, it was rebranded from LangDB to vLLora.

What it does

LangDB exposes OpenAI-compatible endpoints for OpenAI, Anthropic, Google, Azure, AWS Bedrock, and other OpenAI-compatible providers, with routing, retries, and fallbacks. A virtual-key system scopes usage per user or team with cost tracking. Semantic caching uses embeddings to deduplicate similar requests and return cached responses.
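The request path described above (semantic cache lookup, then provider routing with retries and fallbacks) can be illustrated with a minimal Python sketch. This is not LangDB's implementation: `toy_embed` is a bag-of-words stand-in for a real embedding model, and `SemanticCache`, `complete_with_fallback`, `handle`, the `0.8` similarity threshold, and the two fake providers are all hypothetical names and values chosen for illustration.

```python
import math
from collections import Counter
from typing import Callable, Dict, List, Optional, Tuple

Embedding = Dict[str, float]


def toy_embed(text: str) -> Embedding:
    """Stand-in for a real embedding model: lowercase bag-of-words counts."""
    return {word: float(n) for word, n in Counter(text.lower().split()).items()}


def cosine(a: Embedding, b: Embedding) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Returns a stored response when a new prompt is similar enough to an old one."""

    def __init__(self, embed: Callable[[str], Embedding] = toy_embed,
                 threshold: float = 0.8) -> None:
        self.embed = embed
        self.threshold = threshold  # assumed value, tune per workload
        self.entries: List[Tuple[Embedding, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        query = self.embed(prompt)
        best_score, best_response = 0.0, None
        for embedding, response in self.entries:
            score = cosine(query, embedding)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((self.embed(prompt), response))


class ProviderError(Exception):
    """Raised by a provider call that failed."""


Provider = Tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: List[Provider],
                           retries: int = 1) -> Tuple[str, str]:
    """Try providers in order; retry each one before falling back to the next."""
    last_error: Optional[Exception] = None
    for name, call in providers:
        for _ in range(retries + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                last_error = exc
    raise ProviderError(f"all providers failed: {last_error}")


# Hypothetical providers: the primary always fails, the backup echoes the prompt.
def flaky(prompt: str) -> str:
    raise ProviderError("primary unavailable")


def backup(prompt: str) -> str:
    return f"echo: {prompt}"


cache = SemanticCache()


def handle(prompt: str) -> Tuple[str, str]:
    """Serve from the cache when possible, otherwise route and store the result."""
    cached = cache.get(prompt)
    if cached is not None:
        return "cache", cached
    name, response = complete_with_fallback(prompt, [("primary", flaky), ("backup", backup)])
    cache.put(prompt, response)
    return name, response
```

In this sketch, a first call to `handle` falls through to the backup provider, and a later prompt that differs only slightly is answered from the cache. A production cache would use a real embedding model and an approximate nearest-neighbor index rather than a linear scan.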

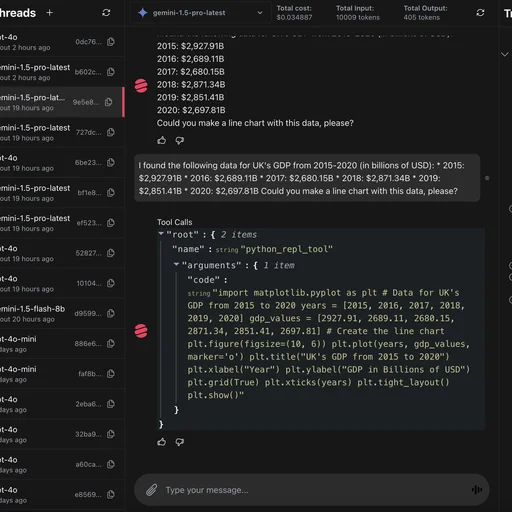

Structured telemetry captures every inference with token counts, latency, cost, and metadata, and the gateway includes MCP client support for calling MCP tools inside completions.
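As a rough illustration of what a per-inference telemetry record and per-key cost tracking might look like, here is a small Python sketch. The field names, the `PRICES` table, the `example-model` name, and the `virtual_key` metadata field are assumptions made for this example and do not reflect LangDB's actual schema or any provider's real pricing.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, Iterable

# Hypothetical prices in USD per one million tokens.
PRICES = {"example-model": {"input": 1.00, "output": 2.00}}


@dataclass
class InferenceRecord:
    """One captured inference: token counts, latency, and free-form metadata."""
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float
    metadata: Dict[str, str] = field(default_factory=dict)

    @property
    def total_tokens(self) -> int:
        return self.prompt_tokens + self.completion_tokens

    def cost_usd(self) -> float:
        """Cost derived from token counts and the assumed price table."""
        price = PRICES[self.model]
        return (self.prompt_tokens * price["input"]
                + self.completion_tokens * price["output"]) / 1_000_000


def cost_by_key(records: Iterable[InferenceRecord]) -> Dict[str, float]:
    """Aggregate cost per virtual key (read from an assumed metadata field)."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.metadata.get("virtual_key", "unknown")] += record.cost_usd()
    return dict(totals)
```

Grouping records by a key stored in metadata is one simple way to attribute spend to a user or team; a real gateway would typically persist these records and aggregate them in a database or tracing backend.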

Where it fits

LangDB suits deployments that want the gateway and observability layers combined in one tool, with semantic caching as a first-class feature. The Rust runtime keeps the operational footprint small.

Licensing

The gateway is published under the Elastic License v2 (ELv2), a source-available license that permits use, modification, and redistribution but prohibits offering the software to third parties as a hosted or managed service. ELv2 is not an OSI-approved open-source license.

Deployment

The gateway is distributed as a Docker image on Docker Hub. Compose references for the newer vLLora-branded builds are published as those builds stabilize.

Limitations

- Project has undergone rebranding (LangDB to vLLora); documentation, image tags, and feature parity can lag the latest release.

- ELv2 prohibits offering the software to third parties as a managed service, which matters for teams planning to bundle LangDB into a customer-facing SaaS offering.

- MCP support is client-side; it does not federate upstream MCP servers behind a unified endpoint.

- Virtual-key depth and governance tooling are less mature in the self-hostable version than in the hosted product.