Langfuse

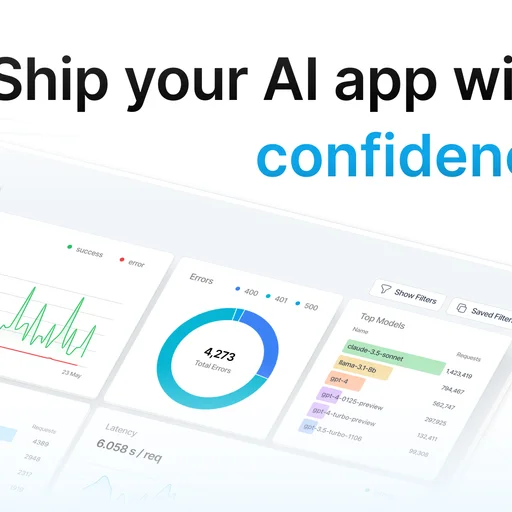

MIT-licensed LLM engineering platform for tracing, prompt management, evaluation, and datasets, with usage captured via SDK instrumentation or gateway integration. The core, including OIDC SSO, is open source; advanced RBAC, audit retention, and SCIM sit in the Enterprise tier.

Langfuse is an MIT-licensed LLM engineering platform providing distributed tracing, prompt management, evaluation, and dataset tooling for teams building LLM-powered applications. The platform captures LLM usage via SDK instrumentation or gateway integration rather than proxying traffic itself.

What it does

Langfuse records each LLM call as a trace span with token counts, latency, cost attribution, and arbitrary metadata, assembling them into nested trace trees that mirror application call stacks. Prompt management supports versioning, labels, and remote prompt loading so prompt edits do not require code deploys. The evaluation framework runs offline batch evaluations and online LLM-as-judge scoring against specified datasets.
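
The nested trace tree described above can be pictured with a small data model. This is a minimal sketch using only the standard library; the `Span` class, its field names, and the sample values are illustrative assumptions, not the Langfuse schema or SDK.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Span:
    """One node in a trace tree (hypothetical model, not the Langfuse schema)."""

    name: str
    latency_ms: float = 0.0
    input_tokens: int = 0
    output_tokens: int = 0
    cost_usd: float = 0.0
    metadata: dict[str, str] = field(default_factory=dict)
    children: list[Span] = field(default_factory=list)

    def total_tokens(self) -> int:
        # Tokens used by this span plus everything nested beneath it.
        own = self.input_tokens + self.output_tokens
        return own + sum(child.total_tokens() for child in self.children)

    def total_cost(self) -> float:
        # Cost attributed to this span plus all descendant spans.
        return self.cost_usd + sum(child.total_cost() for child in self.children)


def render(span: Span, depth: int = 0) -> list[str]:
    """Return one indented line per span, mirroring the call stack."""
    lines = [f"{'  ' * depth}{span.name}: {span.total_tokens()} tokens"]
    for child in span.children:
        lines.extend(render(child, depth + 1))
    return lines


# Made-up example: one request that does retrieval, then a single LLM call.
trace = Span(
    name="answer_question",
    children=[
        Span(name="retrieve_documents", latency_ms=40.0),
        Span(
            name="llm_call",
            latency_ms=850.0,
            input_tokens=1200,
            output_tokens=300,
            cost_usd=0.0045,
            metadata={"model": "example-model"},
        ),
    ],
)

print("\n".join(render(trace)))
```

Rolling totals up from child spans is what lets a per-request view show the cost of a whole call chain while each LLM call keeps its own figures.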

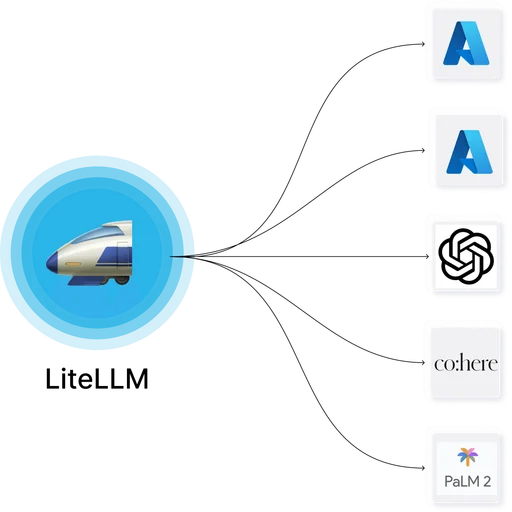
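
The prompt versioning and labels mentioned above work because application code asks for a prompt by name and label, so repointing a label changes behavior without a deploy. The sketch below shows that idea with an in-memory store; `PromptRegistry`, its methods, and the `production` label are hypothetical names chosen for illustration, not the Langfuse API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """In-memory stand-in for a remote prompt store (hypothetical)."""

    versions: dict[str, list[str]] = field(default_factory=dict)
    labels: dict[tuple[str, str], int] = field(default_factory=dict)

    def publish(self, name: str, template: str) -> int:
        # Append a new immutable version; version numbers start at 1.
        history = self.versions.setdefault(name, [])
        history.append(template)
        return len(history)

    def set_label(self, name: str, label: str, version: int) -> None:
        # Point a label such as "production" at an existing version.
        if not 1 <= version <= len(self.versions.get(name, [])):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self.labels[(name, label)] = version

    def get(self, name: str, label: str = "production") -> str:
        # Application code resolves by label, never by hard-coded text.
        version = self.labels[(name, label)]
        return self.versions[name][version - 1]


registry = PromptRegistry()
v1 = registry.publish("summarize", "Summarize: {text}")
registry.set_label("summarize", "production", v1)
before = registry.get("summarize").format(text="...")

# A prompt edit: publish a new version, then move the label.
v2 = registry.publish("summarize", "Summarize in three bullets: {text}")
registry.set_label("summarize", "production", v2)
after = registry.get("summarize")
```

The calling code (`registry.get("summarize")`) is identical before and after the edit; only the label mapping changed.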

Native SDKs exist for Python, TypeScript, and Java. OpenTelemetry integration feeds traces into existing observability stacks. Direct integrations with LiteLLM, Portkey, LangChain, LlamaIndex, and other orchestration frameworks capture traces without manual instrumentation.
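
SDK-style instrumentation generally means wrapping application functions so that each call is recorded. The sketch below illustrates the pattern with the standard library only; the `traced` decorator and the `RECORDS` list are hypothetical stand-ins for an SDK and a tracing backend, not Langfuse's actual API.

```python
import functools
import time

RECORDS = []  # hypothetical stand-in for a tracing backend


def traced(func):
    """Record name, status, and latency for every call to func."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        status = "ok"
        try:
            return func(*args, **kwargs)
        except Exception:
            status = "error"
            raise
        finally:
            # Runs on success and failure, so failed calls are recorded too.
            RECORDS.append(
                {
                    "name": func.__name__,
                    "status": status,
                    "latency_ms": (time.perf_counter() - start) * 1000,
                }
            )

    return wrapper


@traced
def generate_answer(question: str) -> str:
    # Placeholder for a real provider SDK call.
    return f"echo: {question}"


def uninstrumented_call(question: str) -> str:
    # Not wrapped, so nothing is recorded for this function.
    return f"echo: {question}"


generate_answer("hi")
uninstrumented_call("hi")
```

Only the wrapped function produces a record, which is the same reason code paths that are neither instrumented nor routed through an integrated tool stay invisible in traces.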

Where it fits

Langfuse suits teams that pair it with an LLM gateway for rich trace analysis and prompt management, as well as teams that run it directly against application code that calls provider SDKs without a gateway.

Licensing and commercial tier

Core tracing, prompt management, evaluation, dataset tooling, and OIDC SSO are MIT-licensed in the self-hostable repository. Langfuse Cloud is a hosted option. Enterprise-tier features cover advanced RBAC, audit log retention policies, SCIM provisioning, and enterprise-grade support.

Deployment

The reference stack is a Docker Compose deployment with Postgres and ClickHouse for trace storage. A Helm chart is available for Kubernetes. The operational footprint is comparable to other ClickHouse-backed observability tools.

Limitations

- Not a gateway: Langfuse captures data via its SDKs or via integrations with a gateway, so applications that are neither instrumented nor routed through an integrated tool will not appear in traces.

- ClickHouse dependency adds operational weight compared to Postgres-only tools.

- Some enterprise governance features (advanced RBAC, SCIM) sit in the commercial tier.

- The SDK instrumentation path is heavy: teams that prefer zero-code-change telemetry typically get observability at the gateway layer (LiteLLM's Logs UI, Helicone, etc.) and add Langfuse only when they need its prompt management or evaluation depth.