Gazebo

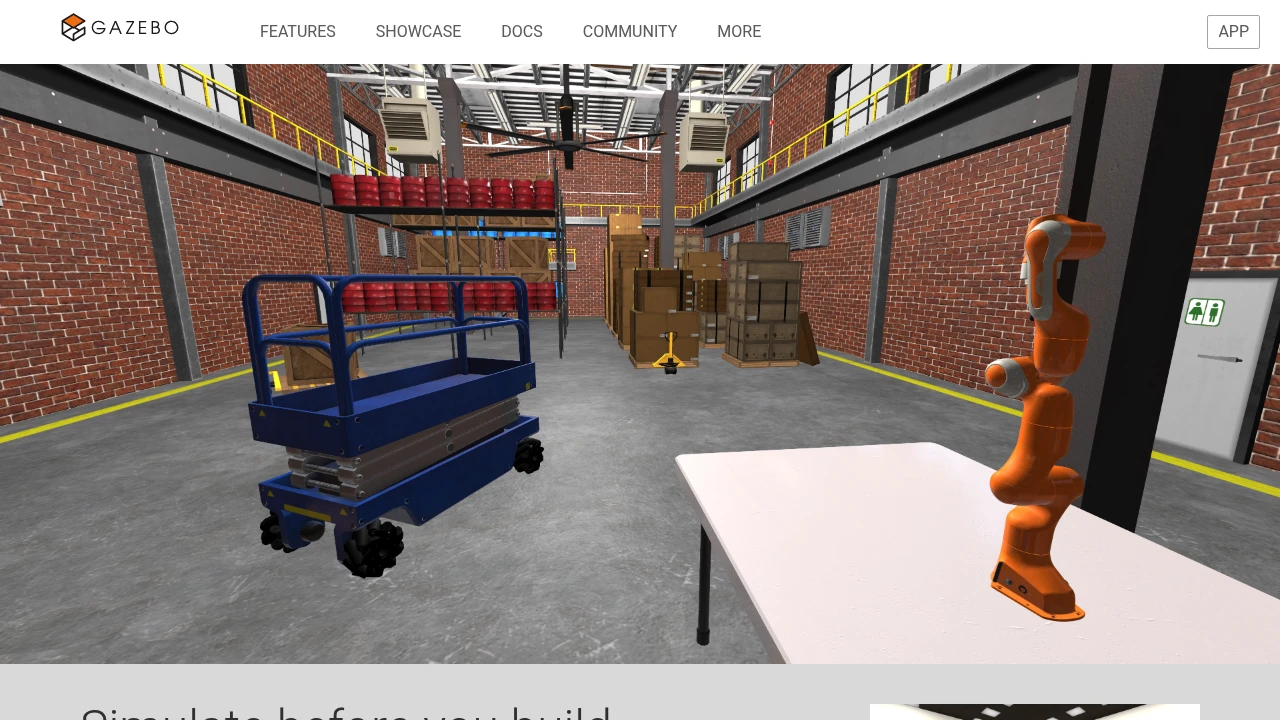

Open-source robotics simulator for modeling robots, sensors, and environments with pluggable physics and rendering engines. It is widely used for virtual prototyping, algorithm testing, and ROS 2 simulation workflows.

Gazebo is an open-source robotics simulator used to model robots, environments, sensors, and control systems before hardware is built or deployed. It combines a 3D simulator, SDF-based world description, plugin interfaces for physics and rendering, and tight ROS 2 integration through ros_gz packages and bridge tooling.

What it does

Gazebo lets teams run physics-based simulations for mobile robots, manipulators, and autonomous systems using configurable worlds and reusable models. It supports common robotics workflows such as sensor simulation, controller validation, collision testing, and continuous integration checks for robot software.

How it is typically used

Engineering teams use Gazebo to iterate on robot designs and behaviors before touching physical hardware. A common pattern is to build worlds in SDF, run the simulator headless or with the GUI, bridge data to ROS 2, and visualize the resulting state in tools such as RViz while testing planners, navigation stacks, or manipulation logic.
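As a rough illustration of the "build worlds in SDF" step, the sketch below assembles a minimal world file with Python's standard library. The world name, the SDF version string, and the ground-plane dimensions are illustrative assumptions, not values taken from any particular project; a real world would usually add physics settings, system plugins, and robot models.

```python
import xml.etree.ElementTree as ET


def build_world_sdf(world_name: str = "demo_world") -> str:
    """Return a minimal SDF world (one light, one static ground plane) as text.

    All names and numeric values here are placeholders for illustration.
    """
    # Assumed SDF version; use the one your Gazebo release supports.
    sdf = ET.Element("sdf", version="1.9")
    world = ET.SubElement(sdf, "world", name=world_name)

    # A directional light so the scene is visible in the GUI.
    light = ET.SubElement(world, "light", name="sun", type="directional")
    ET.SubElement(light, "pose").text = "0 0 10 0 0 0"
    ET.SubElement(light, "direction").text = "-0.5 0.1 -0.9"

    # A static ground plane with matching collision and visual geometry.
    model = ET.SubElement(world, "model", name="ground_plane")
    ET.SubElement(model, "static").text = "true"
    link = ET.SubElement(model, "link", name="link")
    for tag in ("collision", "visual"):
        element = ET.SubElement(link, tag, name=tag)
        geometry = ET.SubElement(element, "geometry")
        plane = ET.SubElement(geometry, "plane")
        ET.SubElement(plane, "normal").text = "0 0 1"
        ET.SubElement(plane, "size").text = "100 100"

    ET.indent(sdf)  # pretty-print; requires Python 3.9+
    return '<?xml version="1.0"?>\n' + ET.tostring(sdf, encoding="unicode")


if __name__ == "__main__":
    print(build_world_sdf())
```

Generating the file programmatically is only one option; many teams write SDF by hand or start from an existing example world.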

Architecture and extensibility

Gazebo is built as a modular simulator with separate libraries for simulation, transport, physics, rendering, and sensors. The platform exposes plugin interfaces for physics engines, rendering backends, GUI extensions, and custom simulation systems, which makes it suitable for research teams and product teams that need to customize environments or runtime behavior.

Deployment and operations

Gazebo is typically installed locally from Ubuntu packages, ROS vendor packages, or source builds, in development environments on Linux and macOS. The server and GUI can run as separate processes, which allows headless execution for automation and CI. Releases are named and versioned (for example Fortress, Harmonic, Ionic, and Jetty), and each has its own support window.
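For headless runs in CI, one possible approach is to assemble the simulator command line in a small helper and hand it to a process runner. The sketch below assumes the `gz sim` command-line tool of recent Gazebo releases with its `-s` (server only), `-r` (run on start), and `--iterations` options; Fortress uses the `ign gazebo` executable instead, so the `executable` parameter is left configurable. The function name and the world path are hypothetical.

```python
import shlex


def headless_sim_command(world_path, iterations=None, run_on_start=True,
                         executable=("gz", "sim")):
    """Build an argument list for a server-only (no GUI) simulation run."""
    cmd = list(executable) + ["-s"]  # -s: start the server without the GUI
    if run_on_start:
        cmd.append("-r")  # -r: begin stepping immediately instead of paused
    if iterations is not None:
        if iterations <= 0:
            raise ValueError("iterations must be a positive integer")
        # Stop after a fixed number of simulation steps so the CI job ends.
        cmd += ["--iterations", str(iterations)]
    cmd.append(world_path)
    return cmd


if __name__ == "__main__":
    command = headless_sim_command("world.sdf", iterations=1000)
    print(shlex.join(command))  # gz sim -s -r --iterations 1000 world.sdf
    # In a CI job this list could be passed to subprocess.run(command, check=True).
```

Bounding the run with a fixed iteration count keeps test duration predictable; a wall-clock timeout on the process is a reasonable extra safeguard.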

Limitations

- Gazebo is not a turnkey industrial digital twin platform; it is a simulator toolkit, so teams still need to build their own world models, robot descriptions, and validation workflows.

- The official documentation describes Windows support as incomplete and the macOS GUI as less stable than the Linux GUI for some plugins and interactions.

- ROS and Gazebo version pairing matters: unsupported ROS 2 and Gazebo combinations can require non-default packages or source compilation.

- High-fidelity simulations with rich sensors, large worlds, or custom plugins can demand substantial CPU and GPU resources compared with lightweight robotics simulators.
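The version-pairing limitation above can be turned into an early check in a setup or CI script. The sketch below is deliberately incomplete: it lists only two pairings (ROS 2 Humble with Fortress, ROS 2 Jazzy with Harmonic) as an assumed starting point, and the table should be checked against the current official installation guidance before being relied on. The function and constant names are hypothetical.

```python
# Assumed default pairings; extend and verify against the official docs.
RECOMMENDED_PAIRINGS = {
    "humble": "fortress",
    "jazzy": "harmonic",
}


def check_pairing(ros_distro: str, gz_release: str) -> str:
    """Classify a ROS 2 / Gazebo combination.

    Returns "recommended" if it matches the table, "non-default" if the ROS 2
    distro is known but paired with a different Gazebo release, and "unknown"
    if the distro is not in the table at all.
    """
    ros = ros_distro.strip().lower()
    gz = gz_release.strip().lower()
    expected = RECOMMENDED_PAIRINGS.get(ros)
    if expected is None:
        return "unknown"
    return "recommended" if expected == gz else "non-default"


if __name__ == "__main__":
    for pair in [("humble", "fortress"), ("humble", "harmonic"), ("foxy", "fortress")]:
        print(pair, "->", check_pairing(*pair))
```

A "non-default" result does not mean the combination cannot work, only that it may need non-default packages or a source build.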

Gazebo can publish ROS 2 data through ros_gz_bridge so RViz can visualize robot models, transforms, and trajectories from a running simulation.
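To make the bridging step more concrete, the sketch below builds the topic-mapping argument that the `parameter_bridge` executable from ros_gz_bridge accepts on its command line. It assumes the `topic@ros_type<direction>gz_type` form, where `@` is bidirectional, `[` forwards Gazebo to ROS 2, and `]` forwards ROS 2 to Gazebo. The `gz.msgs` prefix applies to recent releases (Fortress uses `ignition.msgs`), and the helper name is hypothetical.

```python
# Direction symbols placed between the ROS 2 type and the Gazebo type.
DIRECTION_SYMBOLS = {
    "bidirectional": "@",
    "gz_to_ros": "[",
    "ros_to_gz": "]",
}


def bridge_argument(topic: str, ros_type: str, gz_type: str,
                    direction: str = "bidirectional") -> str:
    """Format one topic mapping for ros_gz_bridge's parameter_bridge."""
    if direction not in DIRECTION_SYMBOLS:
        raise ValueError(f"unknown direction: {direction!r}")
    if not topic.startswith("/"):
        raise ValueError("topic should be an absolute name such as '/clock'")
    return f"{topic}@{ros_type}{DIRECTION_SYMBOLS[direction]}{gz_type}"


if __name__ == "__main__":
    # Simulation clock flows from Gazebo into ROS 2 only.
    arg = bridge_argument("/clock", "rosgraph_msgs/msg/Clock",
                          "gz.msgs.Clock", direction="gz_to_ros")
    print(arg)  # /clock@rosgraph_msgs/msg/Clock[gz.msgs.Clock
    print("ros2 run ros_gz_bridge parameter_bridge", repr(arg))
```

Quoting the argument in a shell matters because `[` and `]` are glob characters; for more than a few topics, a bridge configuration file is usually easier to maintain than a long command line.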

Gazebo is commonly used alongside MoveIt to simulate manipulators, sensors, and collision scenes before deploying motion-planning pipelines to real robots.

- Kind: Software

- License: Open Source

- Website: gazebosim.org