Portkey Gateway

Portkey Gateway is an MIT-licensed AI gateway from Portkey AI, implemented in Node.js and Go, that routes LLM traffic across 1,600+ provider endpoints through one OpenAI-compatible API. It combines routing, virtual keys, guardrails, caching, observability, and MCP gateway support in a single proxy that runs on Docker or Kubernetes.

What it does

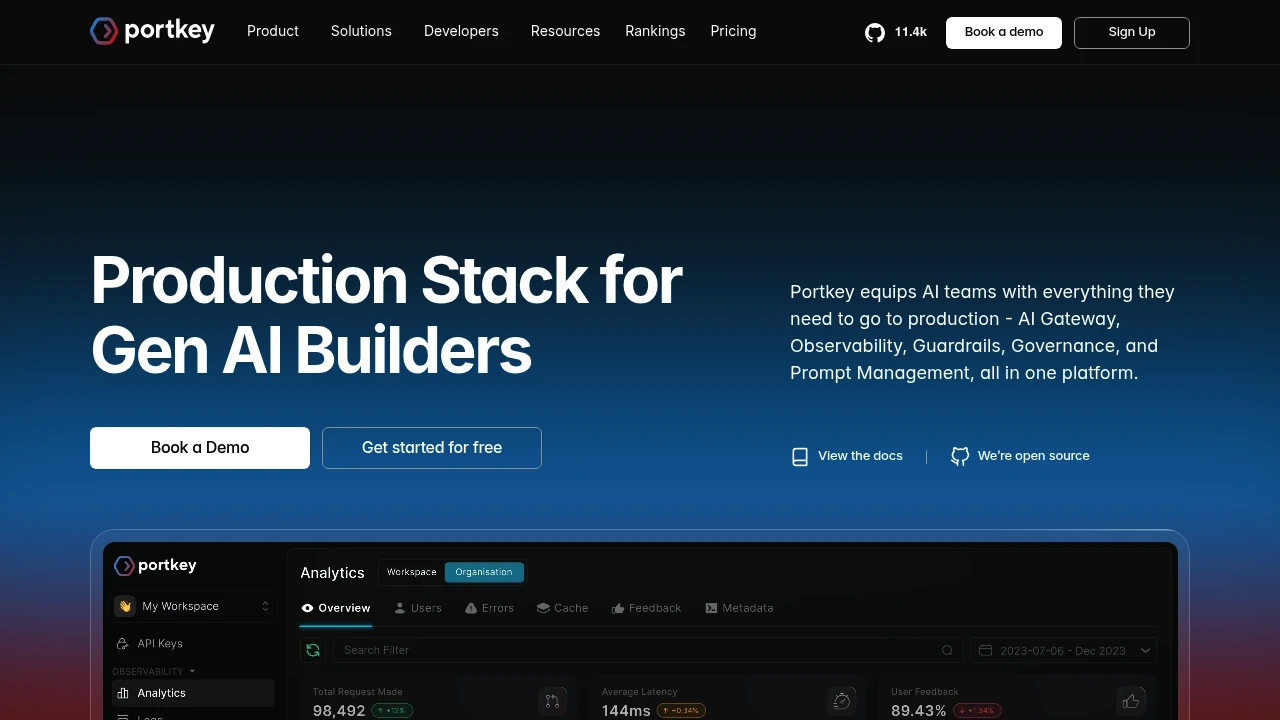

Portkey translates OpenAI-compatible requests into each provider's native format and back, handles retries and fallbacks, enforces rate limits and budgets via virtual keys, and logs every call for cost and trace analysis. The guardrails framework applies input and output checks — PII redaction, JSON schema validation, toxicity filtering, and LLM-as-judge evaluations — either inline or asynchronously.
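The retry-and-fallback behavior described above can be illustrated with a small sketch. This is not Portkey's implementation: the function `call_with_fallback`, the `ProviderError` exception, and the two stand-in provider functions are hypothetical names chosen for illustration, and a real gateway would issue HTTP requests rather than call local functions.

```python
from __future__ import annotations

from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a (hypothetical) provider call when the upstream request fails."""


def call_with_fallback(
    targets: Sequence[tuple[str, Callable[[str], str]]],
    prompt: str,
    attempts_per_target: int = 2,
) -> tuple[str, str]:
    """Try each target in order, retrying it before falling back to the next.

    Returns (provider_name, response_text) from the first target that succeeds.
    Raises ProviderError listing every failed attempt if all targets fail.
    """
    errors: list[str] = []
    for name, send in targets:
        for attempt in range(1, attempts_per_target + 1):
            try:
                return name, send(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt}: {exc}")
    raise ProviderError("all targets failed: " + "; ".join(errors))


# Stand-in providers (assumptions for the sketch, not real integrations).
def flaky_primary(prompt: str) -> str:
    raise ProviderError("503 from upstream")


def healthy_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    provider, text = call_with_fallback(
        [("primary", flaky_primary), ("secondary", healthy_secondary)],
        "hello",
    )
    print(provider, text)
```

The ordered target list is the key design choice: the caller sees one call and one response, while the ordering and retry count decide which upstream actually serves the request.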

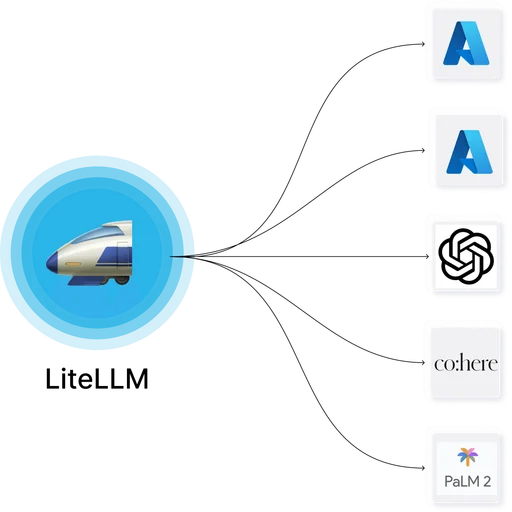
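The rule-based input and output checks mentioned above can be sketched in a few lines. This is a simplified illustration, not Portkey's guardrail code: `redact_emails` and `check_json_output` are hypothetical helpers, the email pattern covers only common address shapes, and the required-keys check is a stand-in for full JSON schema validation.

```python
from __future__ import annotations

import json
import re

# Deliberately simple email pattern; real PII detection covers far more cases.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> str:
    """Input check: replace email addresses with a placeholder before forwarding."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


def check_json_output(raw: str, required_keys: set[str]) -> tuple[bool, str]:
    """Output check: the model output must be a JSON object with the given keys.

    Returns (passed, reason). A stand-in for real JSON schema validation.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict):
        return False, "top-level value is not an object"
    missing = required_keys - set(data)
    if missing:
        return False, "missing keys: " + ", ".join(sorted(missing))
    return True, "ok"


if __name__ == "__main__":
    print(redact_emails("Contact alice@example.com for access"))
    print(check_json_output('{"answer": "yes"}', {"answer", "score"}))
```

An inline check of this kind would run before the request is forwarded or before the response is returned; an asynchronous one would run the same function after the fact and only record the verdict.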

The 1,600-plus provider catalog includes OpenAI, Anthropic, Google, Azure, AWS Bedrock, Cohere, Mistral, Together, Groq, Fireworks, DeepSeek, Perplexity, and many regional and specialty providers.

Where it fits

Portkey is deployed as the central gateway in front of internal AI applications, IDE assistants, and customer-facing features. Virtual keys scope access per user, team, or workload. As of Gateway 2.0 (2026), the gateway also handles MCP traffic, using OAuth 2.1 client credentials for MCP authorization.
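For readers unfamiliar with the client credentials grant, the sketch below builds the standard token request using only the Python standard library. It shows the generic OAuth shape (form-encoded `grant_type=client_credentials` with HTTP Basic client authentication), not a documented Portkey endpoint: the token URL, client ID, secret, and scope are all placeholders, and the request is constructed but not sent.

```python
from __future__ import annotations

import base64
import urllib.parse
import urllib.request


def build_client_credentials_request(
    token_url: str,
    client_id: str,
    client_secret: str,
    scope: str | None = None,
) -> urllib.request.Request:
    """Build (but do not send) an OAuth client credentials token request."""
    form = {"grant_type": "client_credentials"}
    if scope:
        form["scope"] = scope
    body = urllib.parse.urlencode(form).encode("ascii")

    # Client authentication via HTTP Basic; ID and secret are form-encoded first.
    pair = f"{urllib.parse.quote_plus(client_id)}:{urllib.parse.quote_plus(client_secret)}"
    basic = base64.b64encode(pair.encode("utf-8")).decode("ascii")

    return urllib.request.Request(
        token_url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    req = build_client_credentials_request(
        "https://auth.example.com/oauth/token", "my-client", "s3cret", scope="mcp:tools"
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("ascii"))
```

Passing the request to `urllib.request.urlopen` would send it; the JSON response from a conforming authorization server carries an `access_token` that the client then presents as a bearer token.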

Licensing and commercial tier

The full gateway (routing, virtual keys, guardrails, and control plane) is MIT-licensed. Portkey AI operates Portkey Cloud as a managed hosting option that runs the same gateway under the hood and adds operational tooling.

Deployment

The gateway requires a Node.js or container runtime, with optional Redis for caching and Postgres for persistence. Because the gateway is stateless, it scales horizontally behind a reverse proxy. Helm charts and Docker Compose references are provided.

Limitations

- The headline provider count includes many variants of the same upstream APIs; the number of fundamentally distinct integrations is smaller.

- Release velocity is high and config changes occur across minor versions; pin container tags in production.

- Guardrail depth varies: rule-based checks are solid, but LLM-judge guardrails require prompt tuning per use case.

- Node.js operational patterns may not match teams standardized on Python or Go runtimes.