LiteLLM Proxy with ContextForge

LiteLLM and IBM ContextForge occupy complementary layers in an AI control plane. They are not competitors: they commonly run side by side when a deployment needs both governed LLM traffic and MCP federation.

The pairing

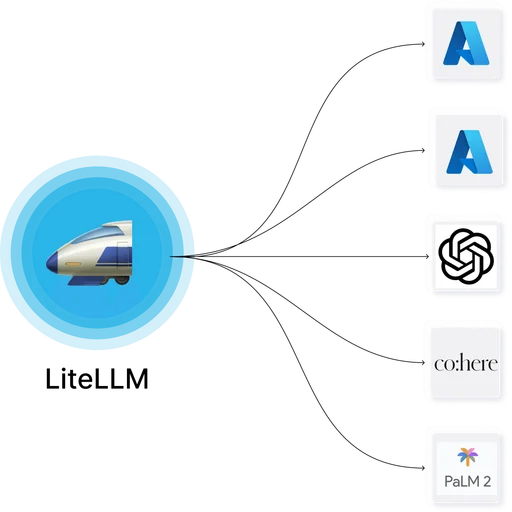

LiteLLM handles LLM gateway concerns: multi-provider routing, retries, fallbacks, virtual keys, hard budget enforcement, cost dashboards, and OpenAI-compatible API exposure. ContextForge handles MCP concerns: federating multiple upstream MCP servers (stdio, SSE, streamable-HTTP) behind a unified endpoint, Cedar-based RBAC and ABAC policy, plugin-based request and response processing, RFC 8707 resource indicators, and tamper-evident audit trails with retention policies.
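Because LiteLLM exposes an OpenAI-compatible API, a client can reach it with an ordinary chat-completions request authenticated by a virtual key. The following is a minimal sketch using only the Python standard library; the base URL, the key, and the model alias are placeholder assumptions, not values from any real deployment, and the request is built but not sent.

```python
import json
import urllib.request

# Hypothetical values: substitute your own proxy URL and virtual key.
LITELLM_BASE_URL = "http://localhost:4000"
VIRTUAL_KEY = "sk-example-virtual-key"


def build_chat_request(prompt: str, model: str = "example-model") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = json.dumps({
        "model": model,  # hypothetical alias; the proxy maps aliases to providers
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{LITELLM_BASE_URL}/v1/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {VIRTUAL_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Routing, retries, fallbacks, and budget checks then happen inside the gateway rather than in client code.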

When to run both

Teams pair the two when MCP surface area grows beyond what a single LLM gateway's embedded MCP support can manage cleanly. Typical triggers are three or more upstream MCP servers with different trust levels, a need for Cedar-style policy expressions, or audit retention requirements beyond request-log granularity.

When one is enough

Teams with a small MCP footprint (one or two upstream servers, no need for Cedar policy) often use LiteLLM's built-in MCP gateway alone. Adding ContextForge brings another service, another Postgres schema, another Redis namespace, and another admin UI, which is justified only when the MCP tier merits its own governance layer.
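The rules of thumb for running both versus running LiteLLM alone can be condensed into a small decision helper. This is only an illustrative sketch: the `McpFootprint` type, its field names, and the exact thresholds are assumptions drawn from the guidance on this page, not part of either product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpFootprint:
    """Hypothetical summary of a team's MCP requirements."""
    upstream_servers: int             # number of upstream MCP servers
    distinct_trust_levels: int        # how many different trust levels they span
    needs_cedar_policy: bool          # Cedar-style policy expressions required
    needs_long_audit_retention: bool  # retention beyond request-log granularity


def should_add_contextforge(fp: McpFootprint) -> bool:
    """Return True when the MCP tier likely merits its own governance layer."""
    many_mixed_trust = fp.upstream_servers >= 3 and fp.distinct_trust_levels > 1
    return many_mixed_trust or fp.needs_cedar_policy or fp.needs_long_audit_retention


# A small footprint stays on LiteLLM's built-in MCP gateway.
small = McpFootprint(2, 1, False, False)
# Several servers at mixed trust levels suggest pairing with ContextForge.
large = McpFootprint(5, 3, False, True)
```

Here `should_add_contextforge(small)` evaluates to `False` and `should_add_contextforge(large)` to `True`. In practice the decision also weighs the operational cost of the extra service.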

Architecture

Clients authenticate once through the organization's OIDC IdP. LLM traffic from IDE assistants and internal applications flows through LiteLLM; MCP traffic from Claude Desktop, Cursor, and similar tools flows through ContextForge. The two services share the IdP and can also share Postgres and Redis instances, with a separate Postgres schema and Redis namespace for each service.
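The topology described above can be modeled in a few lines to make the shared and isolated pieces explicit. This is a sketch under stated assumptions, not a real configuration format for either product: every hostname, schema name, and key prefix below is hypothetical.

```python
from dataclasses import dataclass

# Hypothetical shared identity provider for both gateways.
OIDC_ISSUER = "https://idp.example.com"


@dataclass(frozen=True)
class ServiceConfig:
    name: str
    base_url: str         # where clients send traffic
    oidc_issuer: str      # shared IdP
    postgres_schema: str  # per-service schema in a shared database
    redis_prefix: str     # per-service key namespace in a shared Redis


LITELLM = ServiceConfig(
    "litellm", "https://llm.example.com", OIDC_ISSUER, "litellm", "litellm:"
)
CONTEXTFORGE = ServiceConfig(
    "contextforge", "https://mcp.example.com", OIDC_ISSUER, "contextforge", "contextforge:"
)


def gateway_for(traffic: str) -> ServiceConfig:
    """Pick the gateway by traffic type: 'llm' or 'mcp'."""
    if traffic == "llm":
        return LITELLM
    if traffic == "mcp":
        return CONTEXTFORGE
    raise ValueError(f"unknown traffic type: {traffic!r}")
```

An IDE assistant would resolve `gateway_for("llm")`, while an MCP client such as Claude Desktop would resolve `gateway_for("mcp")`. Both present tokens from the same issuer, and neither service reads the other's schema or key namespace.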