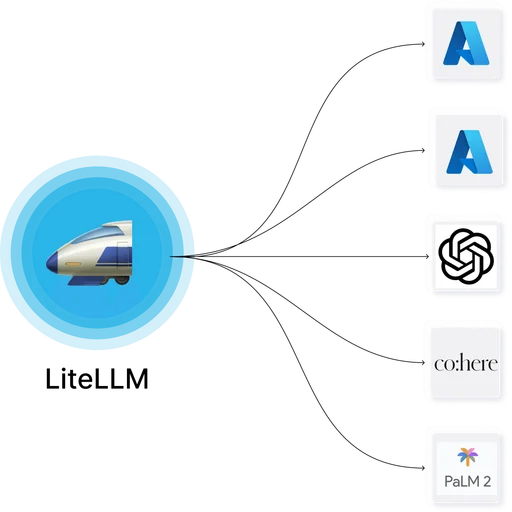

LiteLLM Proxy with Langfuse

LiteLLM and Langfuse occupy different layers of an LLM stack and are commonly deployed together. LiteLLM handles provider abstraction, routing, virtual keys, and cost enforcement at the gateway layer. Langfuse handles deep trace analysis, prompt management, evaluation, and dataset curation at the engineering layer.

Integration path

LiteLLM ships a built-in Langfuse callback. Setting callbacks: ["langfuse"] under litellm_settings in config.yaml, with Langfuse credentials available to the proxy, sends every request, response, token count, and cost record to Langfuse automatically, with no application-level instrumentation required. Langfuse then provides trace trees, prompt version history, and evaluation workflows on top of that data.
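As a rough illustration of the setting described above, a minimal config.yaml might look like the sketch below. The model entry (gpt-4o, OPENAI_API_KEY) is a hypothetical placeholder, and the sketch assumes the Langfuse credentials are supplied to the proxy through the LANGFUSE_PUBLIC_KEY, LANGFUSE_SECRET_KEY, and LANGFUSE_HOST environment variables. Some LiteLLM versions document the Langfuse hook under success_callback and failure_callback rather than callbacks, so the exact key is worth checking against the docs for the deployed version.

```yaml
# Minimal sketch of a LiteLLM proxy config with the Langfuse callback enabled.
# Assumed environment variables set on the proxy process:
#   LANGFUSE_PUBLIC_KEY, LANGFUSE_SECRET_KEY, LANGFUSE_HOST

model_list:
  # Hypothetical model entry; replace with the providers you actually route to.
  - model_name: gpt-4o
    litellm_params:
      model: openai/gpt-4o
      api_key: os.environ/OPENAI_API_KEY   # read from the environment at startup

litellm_settings:
  # Key as described in the text above; verify against your LiteLLM version,
  # which may expect success_callback / failure_callback instead.
  callbacks: ["langfuse"]
```

With a config along these lines, applications keep calling the proxy as before, and traces should appear in the Langfuse project that the credentials belong to.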

When teams pair them

Teams pair the two when they need richer trace analysis than LiteLLM's built-in Logs UI provides, or when they want prompt management and offline evaluation alongside gateway governance. Applications need no code changes, because LiteLLM handles the instrumentation at the gateway.

Operational note

Both tools use Postgres, and Langfuse also uses ClickHouse for trace storage. A single Postgres instance can serve both tools as long as each one's tables are isolated in a separate schema; ClickHouse is typically dedicated to Langfuse.