Open WebUI

Self-hostable web UI for LLMs from the Open WebUI project. Multi-user chat with generic OIDC authentication, shared workspaces, document RAG, MCP client support, and Python-based Pipelines and Functions for custom extensions.

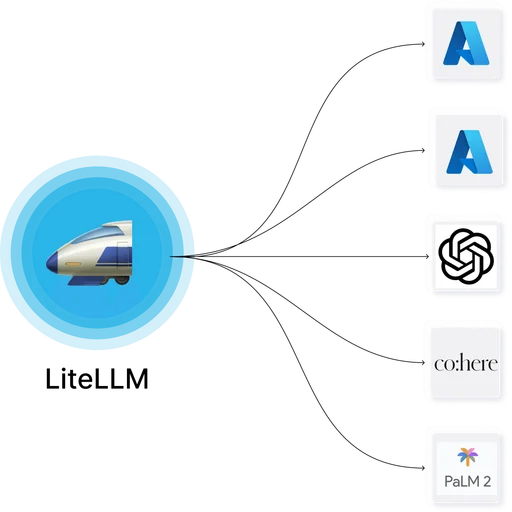

Open WebUI is a self-hostable web interface for large language models, originally built as a frontend for Ollama and now a full multi-user chat platform. It runs as a Docker container and supports direct connections to OpenAI, Anthropic, Ollama, and any OpenAI-compatible endpoint, with authentication, workspaces, RAG, and MCP tool integration built in.

What it does

Users sign in through a web UI, select a model from the providers the admin has configured, and chat. Conversations are saved per-user with tagging and search. Documents uploaded to a workspace are chunked, embedded, and made available to the LLM through RAG. Models can be grouped into shared catalogs with per-role access controls.
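The retrieval flow described above (chunk, embed, retrieve, then pass the context to the model) can be sketched in a few lines. This is a generic illustration under stated assumptions, not Open WebUI's implementation. `chunk_words`, `embed`, `retrieve`, and `build_prompt` are hypothetical names. The term-count "embedding" stands in for a real embeddings model, and the chunk size and overlap values are arbitrary.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def embed(text: str) -> Counter:
    """Toy stand-in for an embeddings model: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Prepend the retrieved chunks to the user's question."""
    joined = "\n---\n".join(context)
    return f"Answer using this context:\n{joined}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "Open WebUI stores chats in SQLite or Postgres.",
        "Redis is optional.",
        "ChromaDB is the default vector store for RAG.",
    ]
    question = "Which vector store does RAG use?"
    print(build_prompt(question, retrieve(question, docs, k=1)))
```

In a real deployment, the chunk vectors would come from the configured embeddings model. They would be computed once at upload time and stored in the vector database, not recomputed for every query.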

Authentication covers email-password, OAuth (GitHub, Google, Microsoft), and generic OIDC for providers like Keycloak, Zitadel, or Authentik. Workspaces let teams share prompts, models, and tools across groups of users. The MCP client lets a conversation invoke tools from registered MCP servers inline.
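As a rough sketch of generic OIDC setup, the helpers below assemble environment variables for a Keycloak realm. The variable names (`ENABLE_OAUTH_SIGNUP`, `OPENID_PROVIDER_URL`, `OAUTH_CLIENT_ID`, and so on) and the `/oauth/oidc/callback` redirect path reflect our understanding of Open WebUI's environment configuration. Check them against the current documentation. `keycloak_discovery_url` and `oidc_env` are hypothetical helpers. The discovery path assumes a Keycloak version that serves realms without the legacy `/auth` prefix.

```python
def keycloak_discovery_url(base_url: str, realm: str) -> str:
    """OIDC discovery document for a Keycloak realm (no legacy /auth prefix)."""
    return f"{base_url.rstrip('/')}/realms/{realm}/.well-known/openid-configuration"


def oidc_env(
    webui_url: str,
    discovery_url: str,
    client_id: str,
    client_secret: str,
    provider_name: str = "SSO",
) -> dict[str, str]:
    """Assumed Open WebUI environment variables for a generic OIDC provider."""
    if not discovery_url.endswith("/.well-known/openid-configuration"):
        raise ValueError("expected the provider's OIDC discovery URL")
    return {
        "ENABLE_OAUTH_SIGNUP": "true",          # create accounts on first SSO login
        "OAUTH_PROVIDER_NAME": provider_name,   # label shown on the login button
        "OPENID_PROVIDER_URL": discovery_url,
        "OAUTH_CLIENT_ID": client_id,
        "OAUTH_CLIENT_SECRET": client_secret,
        "OAUTH_SCOPES": "openid email profile",
        # Must match the redirect URI registered with the provider (assumed path).
        "OPENID_REDIRECT_URI": f"{webui_url.rstrip('/')}/oauth/oidc/callback",
    }


if __name__ == "__main__":
    env = oidc_env(
        webui_url="https://chat.example.com",
        discovery_url=keycloak_discovery_url("https://sso.example.com", "team"),
        client_id="open-webui",
        client_secret="change-me",
        provider_name="Keycloak",
    )
    for key, value in env.items():
        print(f"{key}={value}")
```

Zitadel and Authentik also publish a standard `/.well-known/openid-configuration` document. For those providers, only the discovery URL changes.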

Extension model

Two extension mechanisms ship with the product:

- Pipelines — a separate service that intercepts requests and responses, letting operators add custom preprocessing, logging, filtering, or routing logic in Python.

- Functions — lightweight custom model-like endpoints defined in Python directly in the admin UI, useful for exposing internal APIs or transformations as selectable "models" in the chat interface.
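A Function that shows up as a selectable model might look roughly like the sketch below. It is modeled on the general shape of Open WebUI "Pipe" Functions: a `Pipe` class whose `pipe(body)` method returns the reply. Confirm the exact interface in the Open WebUI Functions documentation. That includes the optional pydantic `Valves` settings class, which is omitted here to keep the sketch dependency-free. The word-counting behavior is purely illustrative.

```python
class Pipe:
    """Sketch of a Pipe-style Function exposed as a model in the chat picker."""

    def __init__(self):
        # Display name for the model picker (assumed attribute usage).
        self.name = "Word Counter"

    def pipe(self, body: dict) -> str:
        # `body` is assumed to follow the OpenAI chat-completions shape:
        # {"model": ..., "messages": [{"role": ..., "content": ...}, ...]}
        messages = body.get("messages", [])
        last_user = next(
            (m.get("content", "") for m in reversed(messages) if m.get("role") == "user"),
            "",
        )
        if not isinstance(last_user, str):
            # Multimodal content can arrive as a list of parts; keep the text parts.
            last_user = " ".join(
                part.get("text", "")
                for part in last_user
                if isinstance(part, dict) and part.get("type") == "text"
            )
        words = last_user.split()
        return f"{len(words)} words, {len(last_user)} characters"


if __name__ == "__main__":
    request = {
        "messages": [
            {"role": "system", "content": "You are helpful."},
            {"role": "user", "content": "hello open webui"},
        ]
    }
    print(Pipe().pipe(request))
```

To wrap an internal API instead, replace the body of `pipe` with the HTTP call and return its response text.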

A plugin marketplace and tool marketplace provide community-built extensions.

Deployment

Single Docker container backed by SQLite for small installs or Postgres for multi-user production. Runs on Docker, Docker Swarm, and Kubernetes. Optional Redis for rate limiting and background jobs. RAG uses ChromaDB by default with options to swap in Qdrant, Milvus, or pgvector.
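The storage choices above are normally passed to the container as environment variables. This sketch assumes the names `DATABASE_URL`, `VECTOR_DB`, and `REDIS_URL`, along with the vector-store identifiers listed in the code. Verify both against the Open WebUI environment-variable reference for your version. `deployment_env` and `docker_env_args` are hypothetical helpers.

```python
from typing import Optional

# Vector-store identifiers assumed to be accepted by VECTOR_DB.
SUPPORTED_VECTOR_DBS = {"chroma", "qdrant", "milvus", "pgvector"}


def deployment_env(
    database_url: Optional[str] = None,
    vector_db: str = "chroma",
    redis_url: Optional[str] = None,
) -> dict[str, str]:
    """Build container environment for a given storage layout."""
    if vector_db not in SUPPORTED_VECTOR_DBS:
        raise ValueError(f"unsupported vector store: {vector_db}")
    env = {"VECTOR_DB": vector_db}
    if database_url:
        # Without DATABASE_URL the container uses its default SQLite database.
        env["DATABASE_URL"] = database_url
    if redis_url:
        env["REDIS_URL"] = redis_url
    return env


def docker_env_args(env: dict[str, str]) -> list[str]:
    """Turn an env mapping into `docker run` -e flags, sorted for stable output."""
    args: list[str] = []
    for key, value in sorted(env.items()):
        args += ["-e", f"{key}={value}"]
    return args


if __name__ == "__main__":
    small = deployment_env()
    production = deployment_env(
        database_url="postgresql://webui:pw@db:5432/webui",
        vector_db="pgvector",
        redis_url="redis://redis:6379/0",
    )
    print(docker_env_args(small))
    print(docker_env_args(production))
```

Keeping the layout in a small function like this makes the SQLite-to-Postgres switch explicit. The same mapping can feed a Compose file or a Kubernetes manifest.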

Licensing

BSD-3-Clause. Open WebUI Enterprise is a paid support and consulting offering from the project; feature development happens in the open-source repository.

Limitations

- Provider, model, and pipeline configuration is stored in the database and managed through the admin UI or API, not in version-controlled config files.

- The Pipelines extension point runs as a separate service from the main Open WebUI container — teams using Pipelines operate two services instead of one.

- Streaming responses require long-lived WebSocket connections. Reverse proxies in front of Open WebUI must forward WebSocket upgrade requests and allow timeouts long enough for streamed responses.

- Major version upgrades occasionally require database migrations; release notes document the steps.

- RAG depends on an embeddings model that must be configured separately (local via Ollama, or an API-based embedding provider).
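Embedding settings can be kept alongside the other deployment settings. This sketch assumes the variable names `RAG_EMBEDDING_ENGINE`, `RAG_EMBEDDING_MODEL`, `RAG_OPENAI_API_BASE_URL`, and `RAG_OPENAI_API_KEY`. For the Ollama engine, it assumes the server address comes from the instance's existing Ollama connection. `embedding_env` is a hypothetical helper, and `nomic-embed-text` is only an example model name.

```python
from typing import Optional


def embedding_env(
    engine: str,
    model: str,
    api_base_url: Optional[str] = None,
    api_key: Optional[str] = None,
) -> dict[str, str]:
    """Assumed Open WebUI variables for an external embeddings provider."""
    if engine not in {"ollama", "openai"}:
        raise ValueError("engine must be 'ollama' or 'openai'")
    env = {"RAG_EMBEDDING_ENGINE": engine, "RAG_EMBEDDING_MODEL": model}
    if engine == "openai":
        # API-based embeddings need an endpoint and a key.
        if not (api_base_url and api_key):
            raise ValueError("openai engine requires api_base_url and api_key")
        env["RAG_OPENAI_API_BASE_URL"] = api_base_url
        env["RAG_OPENAI_API_KEY"] = api_key
    # Switching models after documents are indexed generally means re-embedding
    # them, since vectors produced by different models are not comparable.
    return env


if __name__ == "__main__":
    print(embedding_env("ollama", "nomic-embed-text"))
```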


Open WebUI vs LibreChat

Open WebUI and LibreChat are both open-source, self-hostable chat UIs with multi-provider support, authentication, and MCP client integration. Open WebUI is BSD-3 with a Pipelines and Functions framework and a SQLite or Postgres backend. LibreChat is MIT with conversation forking, presets, and a MongoDB backend.

LobeChat vs Open WebUI

LobeChat and Open WebUI both target teams that want a self-hostable interface for working with multiple LLM providers, local models, and agent-like workflows. Buyers usually compare them when deciding between a broader agent workspace and a model-centric web UI for self-hosted use.

AnythingLLM vs Open WebUI

AnythingLLM and Open WebUI are both self-hostable AI chat interfaces that add document RAG, multi-provider model support, and agent-style tooling on top of local or hosted LLM backends. Buyers comparing private multi-user chat workspaces commonly shortlist both.