Bifrost

LLM gateway from Maxim AI written in Go, with OpenAI-compatible routing, virtual keys, budgets, MCP client and server, and a plugin-based governance pipeline. Apache 2.0 core with a commercial Bifrost Enterprise tier.

Bifrost is an LLM gateway from Maxim AI written in Go that exposes an OpenAI-compatible API over roughly a dozen providers. It runs as a static binary or Docker container and persists governance state in SQLite, Redis, or a file, depending on configuration, so core operation does not require an external database.
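Because the API is OpenAI-compatible, a client can usually target the gateway by changing only the base URL. The sketch below builds such a request with the Python standard library without sending it. The host, port, `/v1/chat/completions` path, model name, and the choice to pass a virtual key as a bearer token are all assumptions for illustration; check the documentation for the target version for the actual values.

```python
import json
import urllib.request

# Assumptions: hypothetical local gateway address and virtual key.
GATEWAY_BASE_URL = "http://localhost:8080"
VIRTUAL_KEY = "vk-example"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{GATEWAY_BASE_URL}/v1/chat/completions",  # OpenAI-style path (assumed)
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the virtual key travels as a bearer token.
            "Authorization": f"Bearer {VIRTUAL_KEY}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("gpt-4o-mini", "Say hello.")  # placeholder model name
    print(req.get_method(), req.full_url)
    # Sending requires a running gateway:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp))
```

The request body is the same one an OpenAI client would send; only the destination differs.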

What it does

Bifrost routes traffic across OpenAI, Anthropic, Gemini, Azure, AWS Bedrock, Mistral, Cohere, Ollama, and OpenAI-compatible endpoints, with fallbacks, retries, and weighted load balancing. Virtual keys enforce per-user, per-team, and per-workload budgets and rate limits at the API layer. A plugin-based governance pipeline runs request guardrails, response transformations, and custom middleware inline against each request.
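The combination of virtual-key budgets, weighted load balancing, and fallbacks can be sketched in a few lines. This is a minimal illustration of the general technique, not Bifrost's implementation: `VirtualKey`, `weighted_order`, `route`, and the provider names are hypothetical, and the per-request cost is passed in rather than computed from token usage.

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, List


class BudgetExceeded(Exception):
    """Raised when a virtual key cannot afford a request."""


@dataclass
class VirtualKey:
    name: str
    budget_usd: float
    spent_usd: float = 0.0

    def can_afford(self, cost_usd: float) -> bool:
        return self.spent_usd + cost_usd <= self.budget_usd


def weighted_order(weights: Dict[str, float], rng: random.Random) -> List[str]:
    """Order providers by weighted random sampling without replacement.

    Assumes every weight is positive.
    """
    remaining = dict(weights)
    order: List[str] = []
    while remaining:
        names = list(remaining)
        pick = rng.choices(names, weights=[remaining[n] for n in names], k=1)[0]
        order.append(pick)
        del remaining[pick]
    return order


def route(
    key: VirtualKey,
    cost_usd: float,
    weights: Dict[str, float],
    call: Callable[[str], str],
    rng: random.Random,
) -> str:
    """Check the budget, then try providers in weighted order until one succeeds."""
    if not key.can_afford(cost_usd):
        raise BudgetExceeded(key.name)  # rejected before any upstream call
    last_error = None
    for provider in weighted_order(weights, rng):
        try:
            result = call(provider)
        except ConnectionError as exc:
            last_error = exc  # fall back to the next provider
            continue
        key.spent_usd += cost_usd  # charge only on success
        return result
    raise RuntimeError("all providers failed") from last_error
```

Sampling without replacement gives the weighted first choice and a fallback order in one pass; a production gateway would also track per-provider retries and rate limits.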

The MCP subsystem covers both roles in one process. As a client, Bifrost can invoke MCP tools inside completions. As a server, it aggregates upstream MCP servers and re-exposes them to other clients, with per-virtual-key tool filtering so different keys see different subsets of the tool catalog.
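Per-virtual-key tool filtering amounts to aggregating the upstream catalogs and then intersecting the result with an allowlist for the calling key. The sketch below shows that idea only; the server names, tool names, key names, and the `server.tool` namespacing scheme are hypothetical and do not reflect Bifrost's actual data model.

```python
from typing import Dict, List, Set

# Hypothetical catalog: upstream MCP server name -> tools it exposes.
UPSTREAM_TOOLS: Dict[str, List[str]] = {
    "filesystem": ["read_file", "write_file"],
    "search": ["web_search"],
}

# Hypothetical policy: virtual key -> namespaced tools it may see.
KEY_ALLOWLIST: Dict[str, Set[str]] = {
    "vk-analyst": {"filesystem.read_file", "search.web_search"},
    "vk-admin": {
        "filesystem.read_file",
        "filesystem.write_file",
        "search.web_search",
    },
}


def aggregate(upstreams: Dict[str, List[str]]) -> List[str]:
    """Merge upstream catalogs into one sorted list, namespaced by server."""
    return sorted(
        f"{server}.{tool}"
        for server, tools in upstreams.items()
        for tool in tools
    )


def visible_tools(
    virtual_key: str,
    upstreams: Dict[str, List[str]],
    allowlist: Dict[str, Set[str]],
) -> List[str]:
    """Return the subset of the aggregated catalog this key may see."""
    allowed = allowlist.get(virtual_key, set())  # unknown keys see nothing
    return [tool for tool in aggregate(upstreams) if tool in allowed]


if __name__ == "__main__":
    for key in ("vk-analyst", "vk-admin", "vk-unknown"):
        print(key, visible_tools(key, UPSTREAM_TOOLS, KEY_ALLOWLIST))
```

Defaulting unknown keys to an empty set keeps the filter fail-closed: a key with no policy entry sees no tools.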

OpenTelemetry traces, Prometheus metrics, and structured logs cover observability. Semantic caching and prompt templating sit alongside the routing logic.

Licensing

Core gateway, virtual keys, budgets, MCP client and server, plugins, and observability are Apache 2.0. Maxim AI offers Bifrost Enterprise as a commercial tier covering SAML SSO, extended governance tooling, and hosted operational support.

Deployment

Single static Go binary or Docker container. State persists in SQLite, Redis, or a file. Runs on Docker Swarm, Kubernetes, or plain VMs.

Limitations

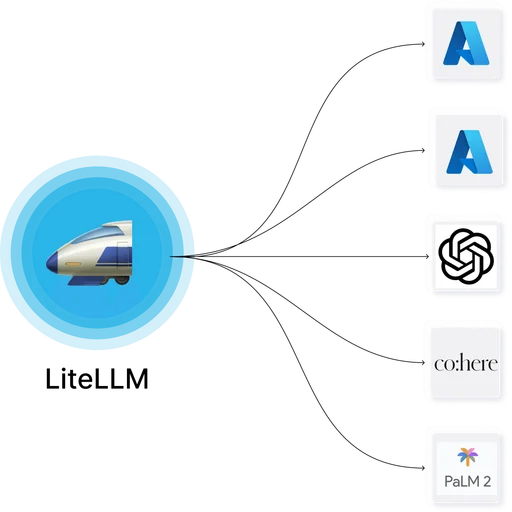

- Native provider catalog is smaller than LiteLLM's; providers outside the main catalog connect via the OpenAI-compatible upstream path.

- Plugin ecosystem is early — most plugins in production today are custom-written by the deploying team rather than installed from a community catalog.

- Project is younger than LiteLLM and Portkey, with a smaller contributor base and shorter release history.

- SAML SSO is in Bifrost Enterprise. Generic OIDC support in the OSS core has been evolving release-to-release; the exact capabilities depend on the target version's release notes.

LiteLLM

LiteLLM and Bifrost both cover LLM gateway plus MCP territory. LiteLLM is Python-first with mature tooling and an open-core commercial tier; Bifrost is Go-first Apache 2.0 from Maxim AI with a lighter operational footprint and SAML as a commercial feature.

Higress

Higress and Bifrost both combine LLM gateway and MCP functionality in an Apache 2.0 binary. Higress is Envoy-based from Alibaba with built-in MCP hosting and OpenAPI-to-MCP conversion; Bifrost is Go-based from Maxim AI with dual-role MCP client plus server and a lighter operational footprint.