Helicone

Open-source LLM observability platform that proxies requests to 20+ providers while capturing traces, cost data, prompts, and user analytics. Self-hostable via Docker Compose with ClickHouse, Postgres, MinIO, and a web UI.

Helicone is an open-source LLM observability platform. It proxies requests to 20+ LLM providers, including OpenAI, Anthropic, Gemini, Azure, Together, OpenRouter, and any OpenAI-compatible endpoint, while capturing traces, cost data, prompts, and user analytics for the web UI and downstream systems.

What it does

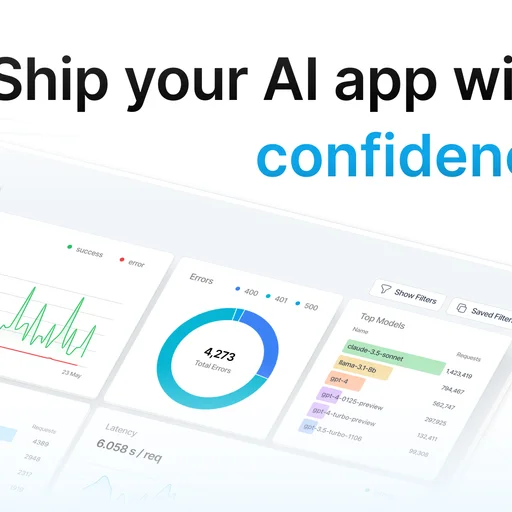

Every request routed through Helicone is recorded with token counts, latency, cost, request and response bodies, and custom metadata. The web UI presents cost analytics broken down by user, model, feature, or any custom property, and lets operators drill into individual traces for debugging. Prompt management supports versioning, A/B testing, and evaluation workflows, and the scoring framework measures output quality over time against custom rubrics.
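To make "cost broken down by user, model, or feature" concrete, here is a toy sketch of the aggregation such a dashboard performs over logged requests. The `RequestLog` shape and the `cost_breakdown` helper are hypothetical illustrations, not Helicone's storage schema or query engine (which, per the deployment notes, sits on ClickHouse).

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class RequestLog:
    """Hypothetical per-request record; NOT Helicone's actual schema."""
    model: str
    cost_usd: float
    properties: dict[str, str] = field(default_factory=dict)


def cost_breakdown(logs: list[RequestLog], key: str) -> dict[str, float]:
    """Sum cost per group: key "model" groups by model, any other key by custom property."""
    totals: dict[str, float] = defaultdict(float)
    for log in logs:
        if key == "model":
            group = log.model
        else:
            # Requests that were never tagged with this property land in one bucket.
            group = log.properties.get(key, "(unset)")
        totals[group] += log.cost_usd
    return dict(totals)


logs = [
    RequestLog("gpt-4o-mini", 0.25, {"Feature": "search", "User": "alice"}),
    RequestLog("gpt-4o", 1.50, {"Feature": "chat", "User": "alice"}),
    RequestLog("gpt-4o-mini", 0.50, {"Feature": "search"}),
]

print(cost_breakdown(logs, "Feature"))  # {'search': 0.75, 'chat': 1.5}
print(cost_breakdown(logs, "User"))     # {'alice': 1.75, '(unset)': 0.5}
print(cost_breakdown(logs, "model"))    # {'gpt-4o-mini': 0.75, 'gpt-4o': 1.5}
```

The "(unset)" bucket shows why consistent tagging matters: untagged traffic still costs money but cannot be attributed to a user or feature.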

Custom properties let applications tag each request with user, session, workflow, or experiment labels that flow into all downstream filters, dashboards, and alerts.
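Tagging is done with HTTP headers on each proxied request. The sketch below assumes the header names from Helicone's public docs (`Helicone-Auth`, `Helicone-User-Id`, `Helicone-Session-Id`, and one `Helicone-Property-<Name>` header per custom property); verify them against the version you run. The `helicone_headers` helper itself is hypothetical.

```python
def helicone_headers(helicone_api_key, user_id=None, session_id=None, properties=None):
    """Build per-request tagging headers (header names assumed from Helicone's docs)."""
    headers = {"Helicone-Auth": f"Bearer {helicone_api_key}"}
    if user_id is not None:
        headers["Helicone-User-Id"] = user_id
    if session_id is not None:
        headers["Helicone-Session-Id"] = session_id
    # Each custom property becomes its own Helicone-Property-<Name> header;
    # HTTP header values must be strings.
    for name, value in (properties or {}).items():
        headers[f"Helicone-Property-{name}"] = str(value)
    return headers


headers = helicone_headers(
    "sk-helicone-placeholder",
    user_id="user-123",
    session_id="3f2b8c1e-5d4a-4e7b-9c6f-1a2b3c4d5e6f",
    properties={"Feature": "search", "Experiment": "prompt-v2"},
)
for name, value in headers.items():
    print(f"{name}: {value}")
```

With the OpenAI Python SDK (v1), a dict like this can be passed per call through the `extra_headers` argument, so different requests from one client can carry different user, session, or experiment labels.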

Integration

Two integration paths are supported: changing the base URL of the OpenAI SDK (or any OpenAI-compatible SDK) to route through Helicone's proxy, or using Helicone's native client library for additional control. A gateway routing layer on top of the observability proxy adds retries, fallbacks, caching, and rate limiting.
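A minimal sketch of the base-URL path, assuming Helicone Cloud's OpenAI proxy at `https://oai.helicone.ai/v1` and header-toggled gateway features (`Helicone-Cache-Enabled`, `Helicone-Retry-Enabled`, `Helicone-RateLimit-Policy`); the URL, header names, and the `"1000;w=3600"` policy syntax are assumptions to check against current docs. `openai_client_kwargs` is a hypothetical helper, and a self-hosted stack would substitute its own proxy URL. The native client library path is not shown.

```python
# Assumption: Helicone Cloud's OpenAI proxy endpoint; a self-hosted stack exposes its own URL.
HELICONE_OPENAI_BASE_URL = "https://oai.helicone.ai/v1"


def openai_client_kwargs(openai_api_key, helicone_api_key,
                         base_url=HELICONE_OPENAI_BASE_URL,
                         cache=False, retries=False, rate_limit_policy=None):
    """Keyword arguments for an OpenAI-compatible client routed through Helicone."""
    headers = {"Helicone-Auth": f"Bearer {helicone_api_key}"}
    # Gateway features are switched on per client via headers (names assumed from docs).
    if cache:
        headers["Helicone-Cache-Enabled"] = "true"
    if retries:
        headers["Helicone-Retry-Enabled"] = "true"
    if rate_limit_policy is not None:
        headers["Helicone-RateLimit-Policy"] = rate_limit_policy
    # The provider key still goes to the provider; Helicone only adds its own auth header.
    return {"api_key": openai_api_key, "base_url": base_url, "default_headers": headers}


kwargs = openai_client_kwargs(
    "sk-openai-placeholder",
    "sk-helicone-placeholder",
    cache=True,
    rate_limit_policy="1000;w=3600",  # assumed syntax: 1000 requests per 3600-second window
)
print(kwargs["base_url"])
print(kwargs["default_headers"])
```

In the OpenAI Python SDK (v1), `api_key`, `base_url`, and `default_headers` are constructor parameters, so the dict can be splatted as `OpenAI(**kwargs)`. Keeping the Helicone-specific settings in one place makes moving between Helicone Cloud and a self-hosted proxy a single `base_url` change.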

Licensing

Apache 2.0 core. Helicone Cloud is the hosted offering. Enterprise-tier features in the commercial product cover SAML SSO, advanced RBAC, and extended data governance; the core proxy, observability backend, dashboards, and generic OIDC are in the OSS repo.

Deployment

Docker Compose reference stack with the gateway, ClickHouse for analytics, Postgres, MinIO for object storage, and the web UI. Helm charts are available for Kubernetes.

Limitations

- ClickHouse dependency adds operational weight compared to Postgres-only tools.

- MCP federation is not part of the platform — Helicone exposes its own data as MCP resources but does not aggregate upstream MCP servers.

- Virtual-key budget enforcement is lighter than in gateways where governance is the primary feature: Helicone's budget and rate-limit logic lives in the gateway routing layer rather than in a first-class wallet abstraction.

- The self-hosted reference stack has five moving parts (proxy, web, Postgres, ClickHouse, MinIO) — not the smallest footprint among comparable tools.