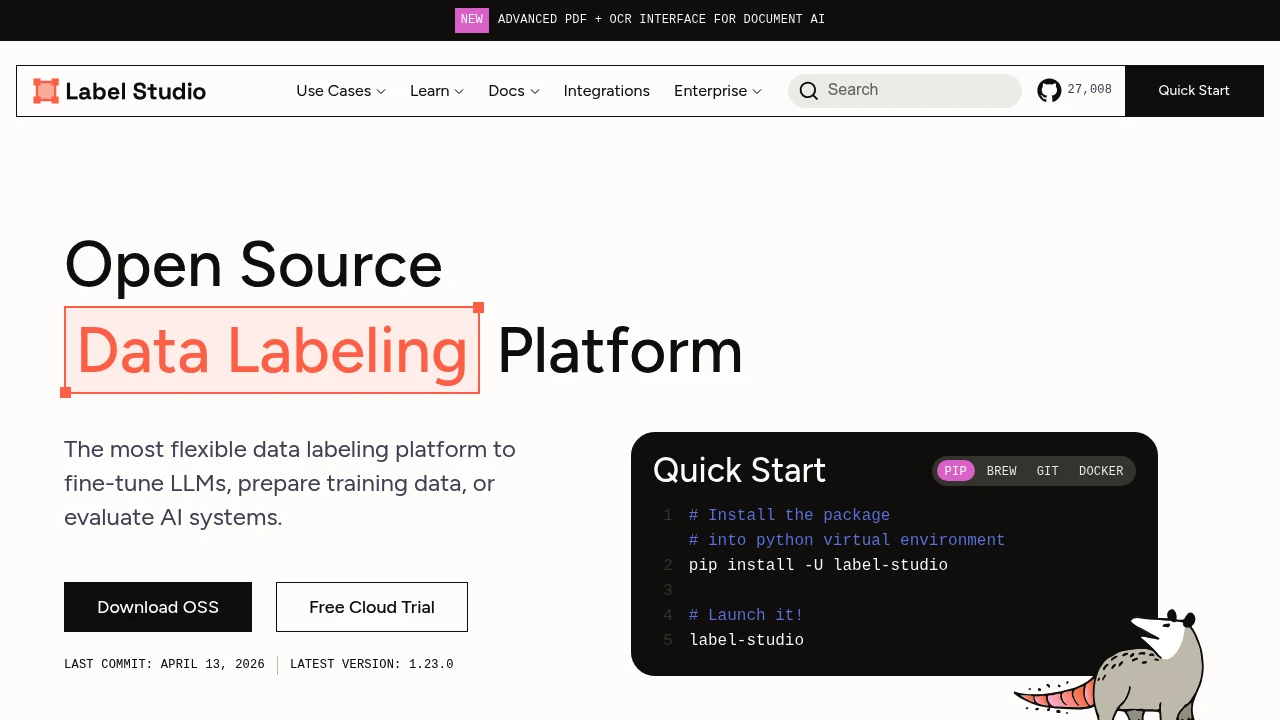

Label Studio

Open-source data labeling platform for multimodal machine learning workflows. Label Studio supports annotation of text, images, audio, video, and time series, as well as LLM evaluation tasks, and provides API, SDK, and storage integrations.

Label Studio is an open-source data labeling platform used to prepare, review, and export training and evaluation data across multiple modalities. Teams use it to run annotation projects for computer vision, natural language, speech, time-series, and generative AI workflows, either self-hosted or through the vendor’s cloud offering.

What it does

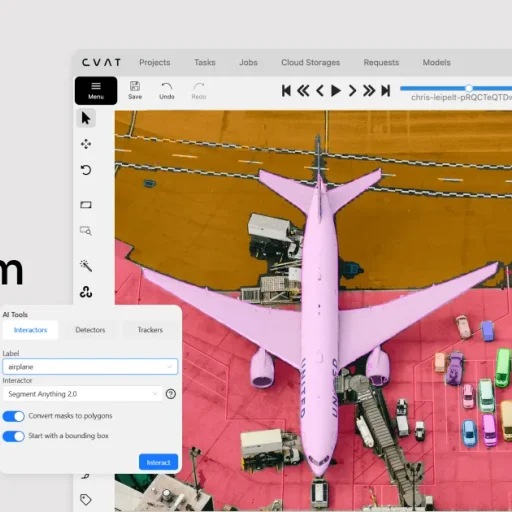

Label Studio provides configurable annotation interfaces, project-based task management, reviewer workflows, and export tooling for labeled datasets. Its documentation and repository emphasize broad modality coverage, including text, images, audio, video, documents, and time series, plus support for pre-labeling and model-assisted annotation.
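As a rough illustration of what a configurable annotation interface looks like, the sketch below defines a small XML labeling config for a text classification task and inspects it with Python's standard library. The tag names (`View`, `Text`, `Choices`, `Choice`) follow Label Studio's XML-style configuration. The control names, the label values, and the `describe_config` helper are hypothetical and exist only for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical config for a sentiment task. The control names ("text",
# "sentiment") and the label values are illustrative assumptions.
LABEL_CONFIG = """
<View>
  <Text name="text" value="$text"/>
  <Choices name="sentiment" toName="text">
    <Choice value="Positive"/>
    <Choice value="Negative"/>
    <Choice value="Neutral"/>
  </Choices>
</View>
"""


def describe_config(config_xml):
    """Summarize which task data fields and labels a config declares."""
    root = ET.fromstring(config_xml.strip())
    # A value attribute starting with "$" points at a key in each task's data.
    data_fields = sorted(
        elem.attrib["value"][1:]
        for elem in root.iter()
        if elem.attrib.get("value", "").startswith("$")
    )
    # Each <Choice> element contributes one selectable label.
    labels = [choice.attrib["value"] for choice in root.iter("Choice")]
    return {"data_fields": data_fields, "labels": labels}


summary = describe_config(LABEL_CONFIG)
print(summary)
```

Under this config, each imported task would need a `text` field in its data, and annotators would pick one of the three labels.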

How it fits into AI pipelines

The platform exposes a REST API, Python SDK, webhooks, and storage connectors so teams can import tasks, sync external datasets, and export annotation results into downstream pipelines. Official docs also describe source and target storage integrations for Amazon S3, Google Cloud Storage, Azure Blob Storage, Redis, and local storage, which makes it practical for teams that want annotation to sit alongside existing data infrastructure.
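A minimal sketch of the import and export round trip through the REST API, using only the Python standard library. It assumes a self-hosted instance at `http://localhost:8080`, a legacy access token sent as `Authorization: Token <key>`, and a project with id `1`; all three values are placeholders. The helper names are hypothetical, and newer releases may expect a different token scheme, so check the API reference for the version in use. The functions only build the requests and do not send them.

```python
import json
import urllib.request

# Placeholder values (assumptions, not defaults taken from Label Studio).
BASE_URL = "http://localhost:8080"
API_TOKEN = "your-api-token"
PROJECT_ID = 1


def build_import_request(tasks):
    """Build a POST request that imports a list of task dicts into the project."""
    return urllib.request.Request(
        url=f"{BASE_URL}/api/projects/{PROJECT_ID}/import",
        data=json.dumps(tasks).encode("utf-8"),
        method="POST",
        headers={
            "Authorization": f"Token {API_TOKEN}",
            "Content-Type": "application/json",
        },
    )


def build_export_request(export_type="JSON"):
    """Build a GET request that exports the project's annotations."""
    return urllib.request.Request(
        url=f"{BASE_URL}/api/projects/{PROJECT_ID}/export?exportType={export_type}",
        method="GET",
        headers={"Authorization": f"Token {API_TOKEN}"},
    )


import_req = build_import_request([{"data": {"text": "Great product"}}])
export_req = build_export_request()
print(import_req.get_method(), import_req.full_url)
print(export_req.get_method(), export_req.full_url)
```

To actually send a request against a running instance, pass it to `urllib.request.urlopen` and read the JSON response. The Python SDK wraps the same endpoints and is usually the more convenient choice in pipeline code.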

Deployment and operations

Label Studio can be installed with Docker, pip, poetry, or Anaconda, and the open-source project includes Docker Compose setups with PostgreSQL and Nginx for more durable deployments. The product also offers a cloud trial, so teams can start with hosted evaluation before deciding whether to run it inside their own environment.

Where it is strongest

Label Studio is strongest when a team needs one annotation system that can cover multiple data types instead of separate tools for text, image, and LLM evaluation work. Its configurable interfaces and ML backend hooks also make it useful when annotation workflows need custom schemas, pre-annotations, or human review around model outputs.
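To make the pre-annotation idea concrete, here is a sketch of a task that carries a model prediction for a human reviewer to accept or correct. It assumes a labeling config with a `Text` control named `text` and a `Choices` control named `sentiment`; those names, the `make_preannotated_task` helper, and the `sketch-v0` model version are hypothetical. The overall shape (a `predictions` list whose `result` entries use `from_name`, `to_name`, `type`, and `value`) follows Label Studio's task JSON format.

```python
import json


def make_preannotated_task(text, label, score, model_version="sketch-v0"):
    """Wrap raw text and one predicted label in a pre-annotated task dict."""
    return {
        "data": {"text": text},
        "predictions": [
            {
                "model_version": model_version,  # hypothetical version tag
                "score": score,                  # model confidence for this task
                "result": [
                    {
                        "from_name": "sentiment",  # assumed Choices control name
                        "to_name": "text",         # assumed Text control name
                        "type": "choices",
                        "value": {"choices": [label]},
                    }
                ],
            }
        ],
    }


task = make_preannotated_task("The battery died after a week.", "Negative", 0.91)
print(json.dumps(task, indent=2))
```

A list of such tasks can be imported like any other task list. Reviewers then see the predicted label pre-selected and only need to change it where the model is wrong.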

Limitations

- The open-source edition is an annotation system, not a full MLOps platform, so teams still need separate tools for experiment tracking, model training, and production deployment.

- Self-hosted production setups are more involved than lightweight single-binary tools because durable deployments typically use multiple services such as PostgreSQL, Nginx, and external object storage.

- Source storage sync is not fully continuous by default; documentation notes that newly uploaded data must be synced through the UI or API rather than appearing automatically in projects.

- Large media workflows can add backend overhead in proxy mode because on-prem deployments stream files in chunks and may require tuning proxy-related environment variables.

- While Label Studio supports many modalities, organizations focused only on high-volume computer vision annotation may prefer a more specialized interface built specifically around visual dataset operations.
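Because source storage sync has to be triggered explicitly, teams often schedule it. The sketch below builds the request for that trigger, assuming the sync endpoint has the form `/api/storages/{type}/{id}/sync` and that a legacy access token is sent as `Authorization: Token <key>`. The `build_sync_request` helper, the storage id `7`, and the base URL are hypothetical placeholders, and the exact path should be confirmed against the API reference for the deployed version.

```python
import urllib.request


def build_sync_request(base_url, token, storage_type, storage_id):
    """Build a POST request that asks a source storage connection to sync."""
    # Assumed path layout: /api/storages/<type>/<id>/sync, e.g. type "s3".
    url = f"{base_url.rstrip('/')}/api/storages/{storage_type}/{storage_id}/sync"
    return urllib.request.Request(
        url=url,
        data=b"",  # empty body; the request only triggers the sync
        method="POST",
        headers={"Authorization": f"Token {token}"},
    )


sync_req = build_sync_request("http://localhost:8080/", "your-api-token", "s3", 7)
print(sync_req.get_method(), sync_req.full_url)
```

Sending this request from a cron job or an upload-completed hook, via `urllib.request.urlopen`, is one way to keep projects close to the contents of the bucket without manual syncing in the UI.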

Label Studio and Datumaro

Label Studio is used to create and manage annotations, while Datumaro is used to transform, validate, and convert datasets between training formats. This makes Datumaro a practical downstream companion when labeled data from Label Studio needs format conversion or dataset processing.

Label Studio and FiftyOne

Label Studio handles human annotation and review, while FiftyOne is used to inspect, visualize, and curate datasets and model outputs. Together they support a workflow where teams label data in Label Studio and analyze dataset quality or model behavior in FiftyOne.

- Kind: Software

- License: Open Source

- Website: labelstud.io